Data Security: encoding, encryption, hashing

#datasecurity #cybersecurity #security #encoding #encryption #hashing

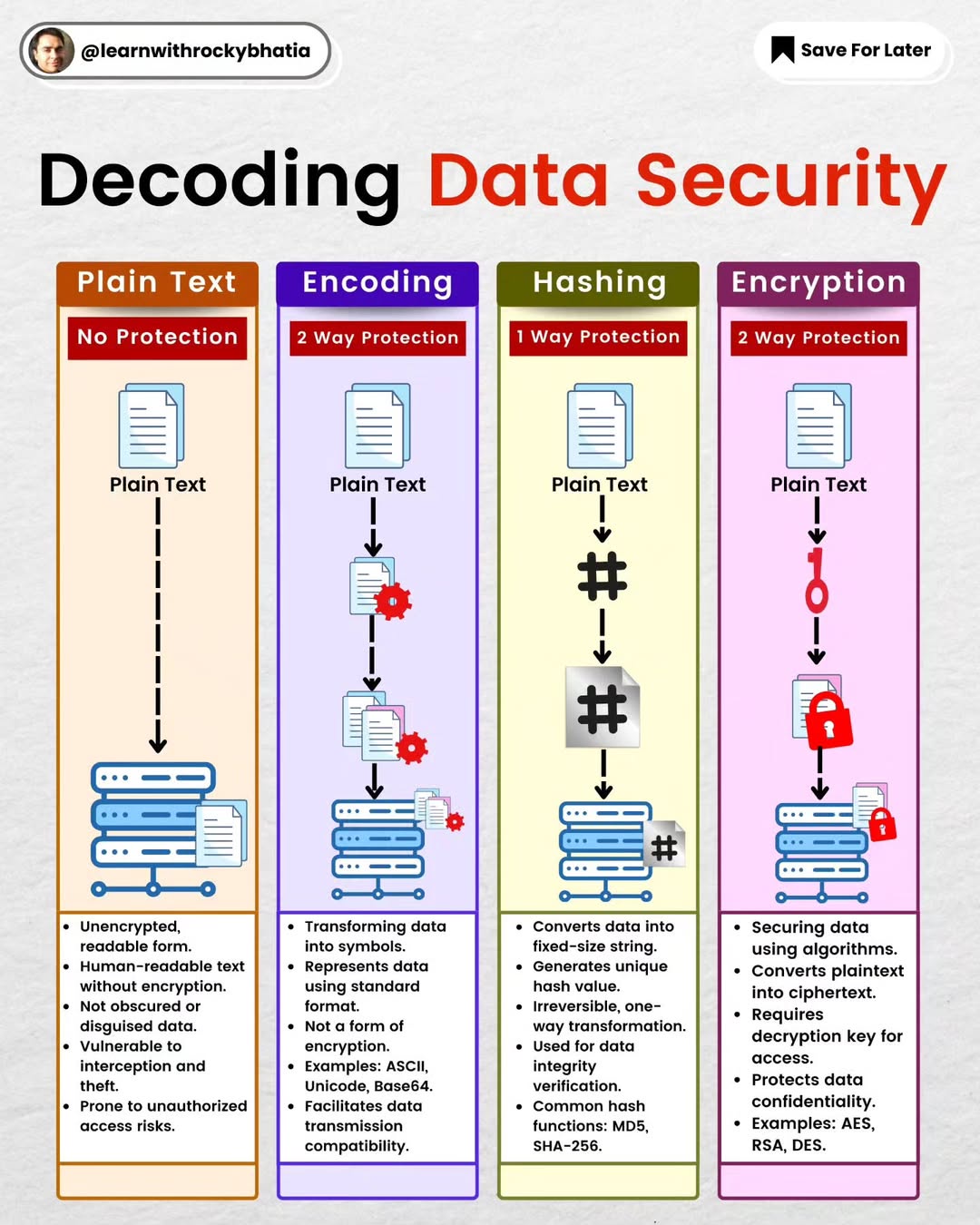

Have you ever found yourself scratching your head trying to figure out the differences between encoding, encryption, and hashing? Well, you're not alone. Let me break it down for you, minus the heavy tech lingo.

Encoding: Your Data's Passport

Think of encoding like giving your data a passport to travel internationally. It's all about converting data into a format that can be easily shared across different systems without confusion. Whether it's Base64, ASCII, or a Unicode encoding such as UTF-8, encoding ensures that your message arrives intact, no matter where it's headed. Remember, encoding isn't about keeping secrets: no key is involved, and anyone who knows the scheme can decode it. It's about making sure your data can be understood anywhere and by anyone it's meant for.
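To make that concrete, here is a small sketch using Python's standard `base64` module. The sample message is made up for illustration; the point is that the round trip needs no key at all.

```python
import base64

message = "héllo, wörld"  # sample text with non-ASCII characters (illustrative)

# Text -> bytes via UTF-8, then bytes -> Base64 text that survives ASCII-only channels.
raw = message.encode("utf-8")
encoded = base64.b64encode(raw).decode("ascii")

# Anyone can reverse the process; nothing secret is required.
decoded = base64.b64decode(encoded).decode("utf-8")

print(encoded)
print(decoded == message)  # True
```

Notice that `decoded` matches the original exactly. That is the whole job of encoding: safe transport, not secrecy.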

Encryption: The Secret Agent

Now, if encoding is your data's passport, encryption is its secret agent disguise. When you encrypt data, you're scrambling it into a code that only someone with the right key can crack. It's the ultimate protection for your sensitive information, ensuring that only the intended recipient can see your message in its true form. Whether you're sending credit card info, private messages, or sensitive documents, encryption keeps your secrets safe from prying eyes.
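Here is a toy sketch of the "only the key holder can read it" idea. It uses a one-time pad (XOR with a random key as long as the message), chosen only because it fits in a few lines of standard-library Python. It is not what you'd ship: real applications typically rely on a vetted cryptography library and an authenticated cipher. The function name and sample message are made up for illustration.

```python
import secrets

def xor_bytes(data: bytes, key: bytes) -> bytes:
    """XOR each data byte with the matching key byte (one-time pad)."""
    if len(key) != len(data):
        raise ValueError("one-time pad key must match the message length")
    return bytes(d ^ k for d, k in zip(data, key))

plaintext = b"card ending 4242"                # illustrative message
key = secrets.token_bytes(len(plaintext))      # random key, used once, kept secret

ciphertext = xor_bytes(plaintext, key)         # scrambled; useless without the key
recovered = xor_bytes(ciphertext, key)         # the same key reverses it

print(recovered == plaintext)  # True
```

Compare this with encoding: here the reverse step is impossible unless you hold `key`.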

Hashing: The One-Way Mirror

Hashing is a bit like a one-way mirror. It transforms your data into a fixed-size string or a “fingerprint,” but here's the kicker: you can't reverse the process. It's fantastic for checking if data has been tampered with and, when you use a salted, deliberately slow algorithm built for the job, for storing passwords. If the data changes even a little bit, the hash will be completely different. It's a one-way trip: once your data is hashed, there's no going back.
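A quick sketch of both uses with Python's standard `hashlib`. The sample strings, the helper names, and the iteration count are assumptions for illustration, not recommendations tuned for any particular system.

```python
import hashlib
import hmac
import os

# Integrity check: a tiny change in the input gives an unrelated digest of the same size.
digest_a = hashlib.sha256(b"transfer 100 to alice").hexdigest()
digest_b = hashlib.sha256(b"transfer 900 to alice").hexdigest()
print(len(digest_a), digest_a != digest_b)  # 64 True

# Password storage: a salted, deliberately slow hash (PBKDF2 here) instead of a bare SHA-256.
def hash_password(password: str, salt: bytes, iterations: int = 100_000) -> bytes:
    return hashlib.pbkdf2_hmac("sha256", password.encode("utf-8"), salt, iterations)

def verify_password(password: str, salt: bytes, stored: bytes) -> bool:
    # Re-hash the attempt and compare in constant time; the hash is never "decrypted".
    return hmac.compare_digest(hash_password(password, salt), stored)

salt = os.urandom(16)                       # random per-user salt
stored = hash_password("correct horse", salt)

print(verify_password("correct horse", salt, stored))  # True
print(verify_password("wrong guess", salt, stored))    # False
```

Verification works by hashing the attempt again and comparing, which is exactly why the one-way property is not a problem.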

Why This Matters to You

Grasping these concepts is key in our digital age, especially if you're dabbling in digital communications, cybersecurity, or just want to keep your online presence safe. Each of these processes has its role, whether it's ensuring your data can travel safely, keeping your information private, or verifying that what you're seeing hasn't been messed with.

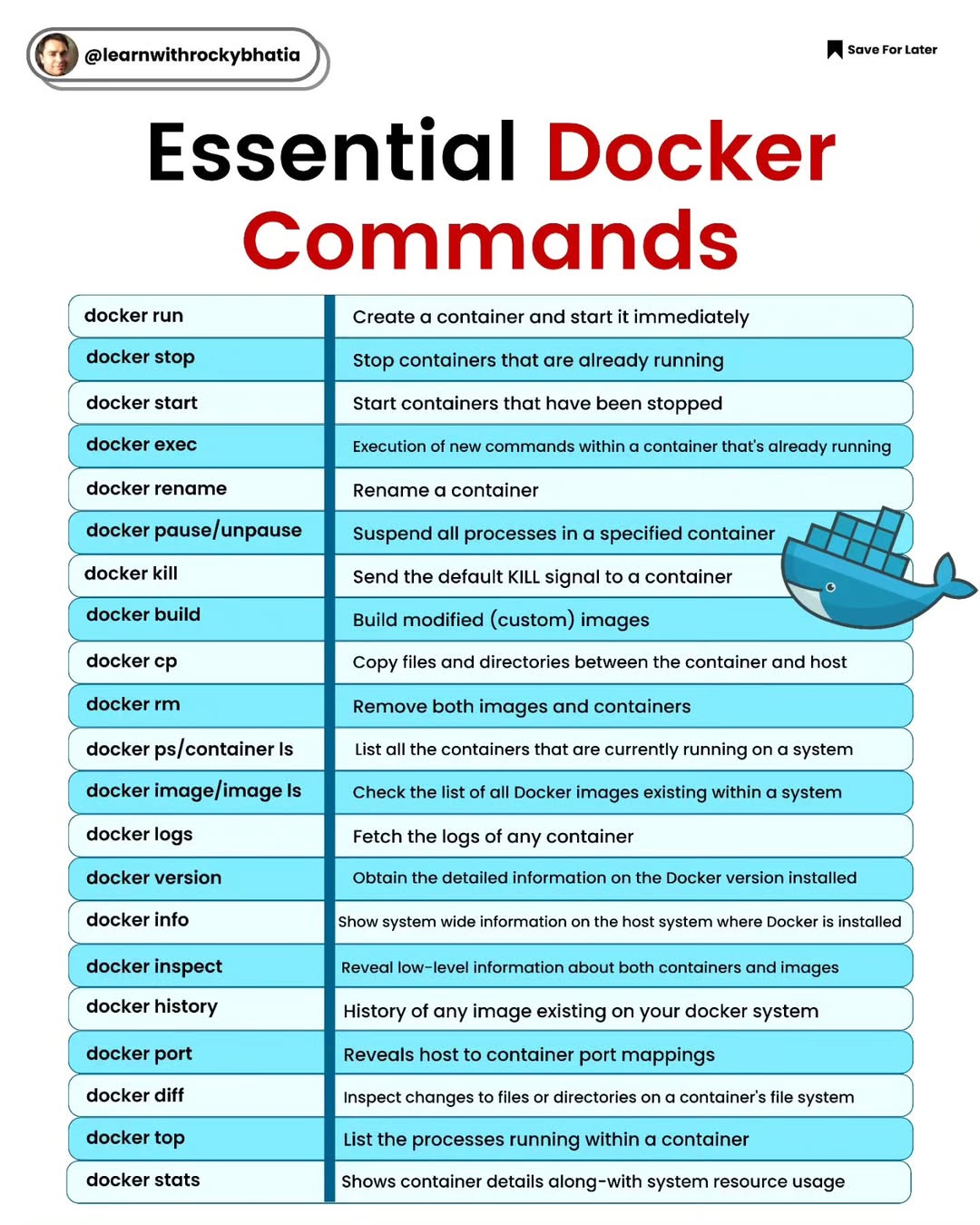

Docker Commands

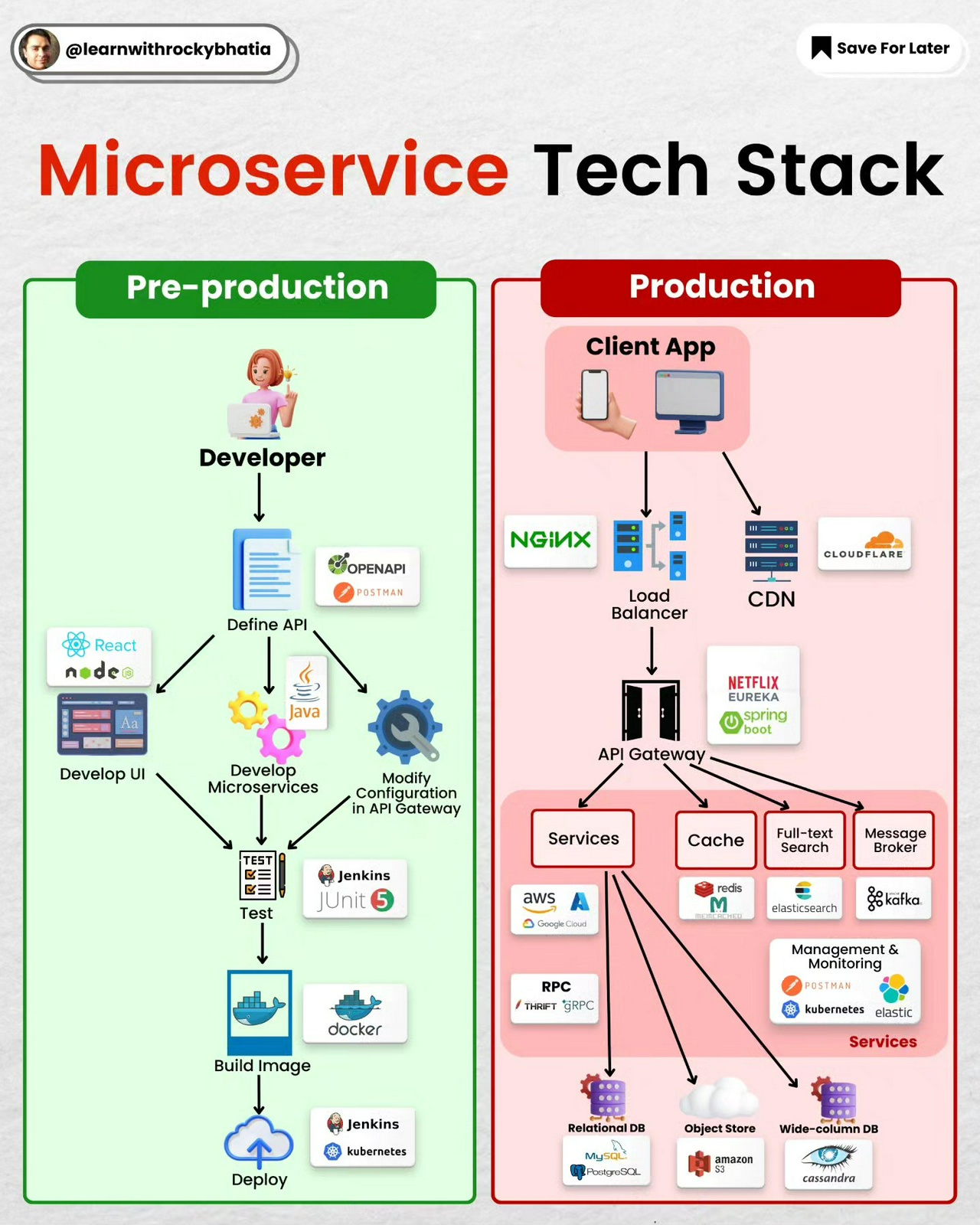

Microservice Tech Stack

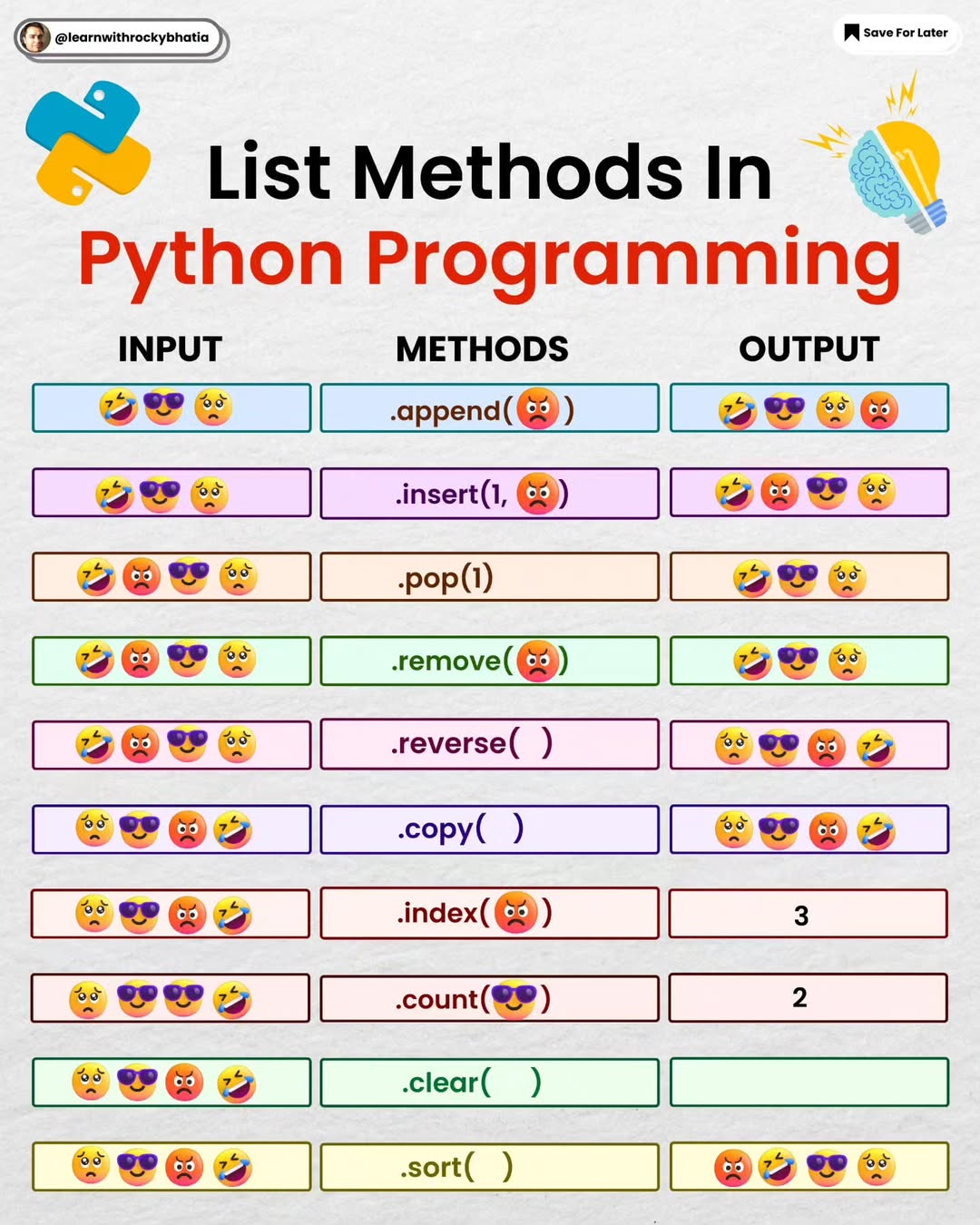

Python List Methods

API vs SDK

API vs. SDK: What Every Developer Should Know

APIs (Application Programming Interfaces) and SDKs (Software Development Kits) are essential tools in a developer's toolkit, but they serve different purposes.

API: Think of it as a contract that allows one software application to interact with another. It’s like a menu in a restaurant – you ask for what you need, and the kitchen (backend) provides it. APIs enable seamless integration and communication between different systems, enhancing functionality without needing to understand the underlying code.

SDK: This is more like a full-fledged toolbox. It includes APIs, documentation, libraries, and sample code to help you build applications for specific platforms. An SDK provides everything needed to create software, making development faster and more efficient.

In essence, while an API is a bridge for communication, an SDK is a toolkit that typically bundles that bridge with the libraries, docs, and samples you need to build on a platform. Both are crucial, but understanding their distinct roles can significantly enhance your development process.
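The difference is easier to see side by side. In this sketch everything is hypothetical: `weather_api` is an in-process stand-in for a remote endpoint so the example runs offline, and `WeatherClient` plays the role of an SDK library wrapped around it.

```python
import json

# The "API": a contract of request in, response out.
# Hypothetical stand-in for a remote HTTP endpoint.
def weather_api(request_json: str) -> str:
    request = json.loads(request_json)
    if "city" not in request:
        return json.dumps({"error": "city is required"})
    return json.dumps({"city": request["city"], "temp_c": 21.5})

# Using the API directly: the caller builds requests and parses responses by hand.
raw = weather_api(json.dumps({"city": "Lisbon"}))
print(json.loads(raw)["temp_c"])  # 21.5

# The "SDK": a library that wraps the API with friendly methods and error handling.
class WeatherError(Exception):
    pass

class WeatherClient:
    def __init__(self, transport=weather_api):
        self._transport = transport

    def current_temp_c(self, city: str) -> float:
        body = json.loads(self._transport(json.dumps({"city": city})))
        if "error" in body:
            raise WeatherError(body["error"])
        return body["temp_c"]

print(WeatherClient().current_temp_c("Lisbon"))  # 21.5
```

Both paths hit the same API. The SDK just saves you from writing the plumbing yourself.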

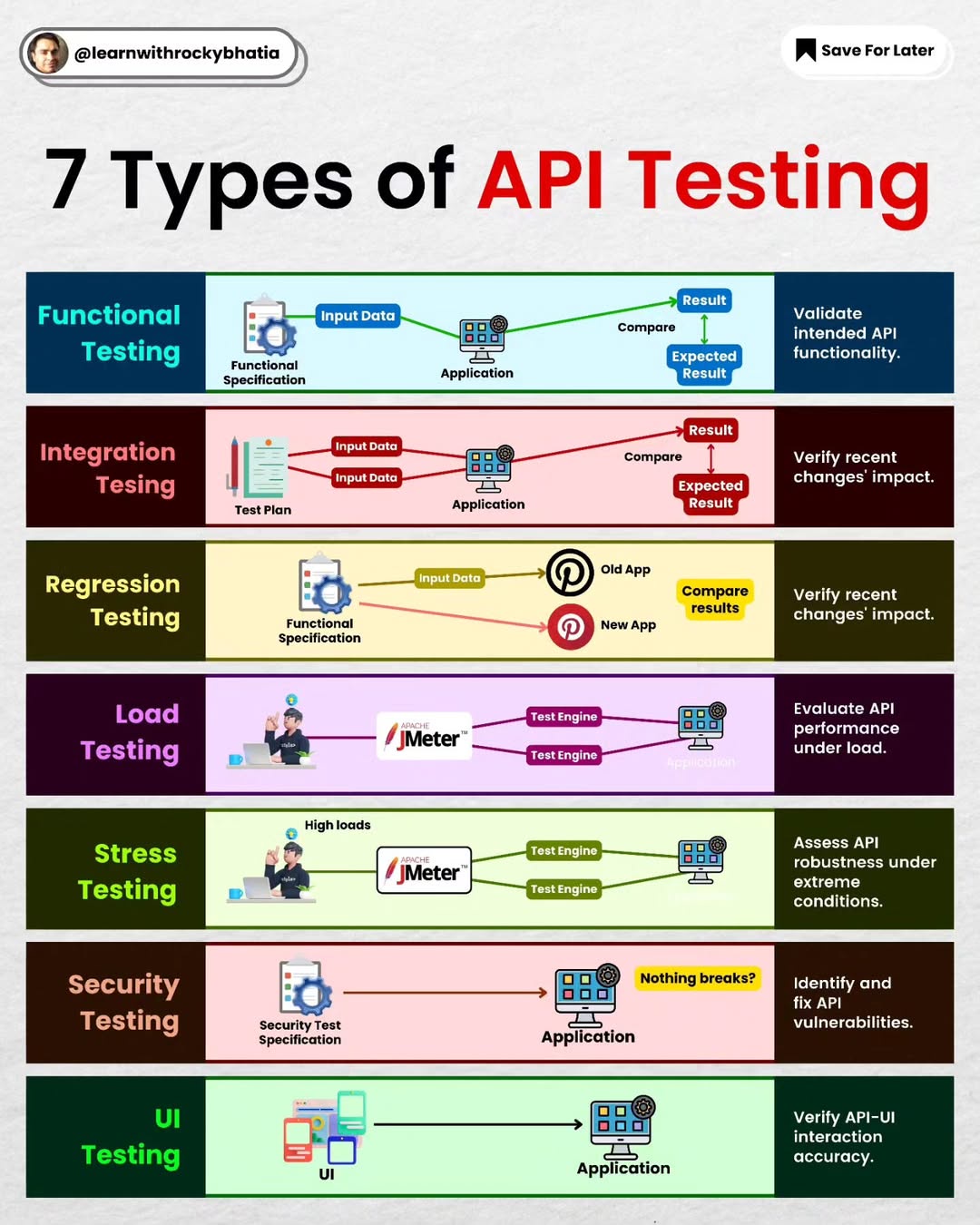

API Testing

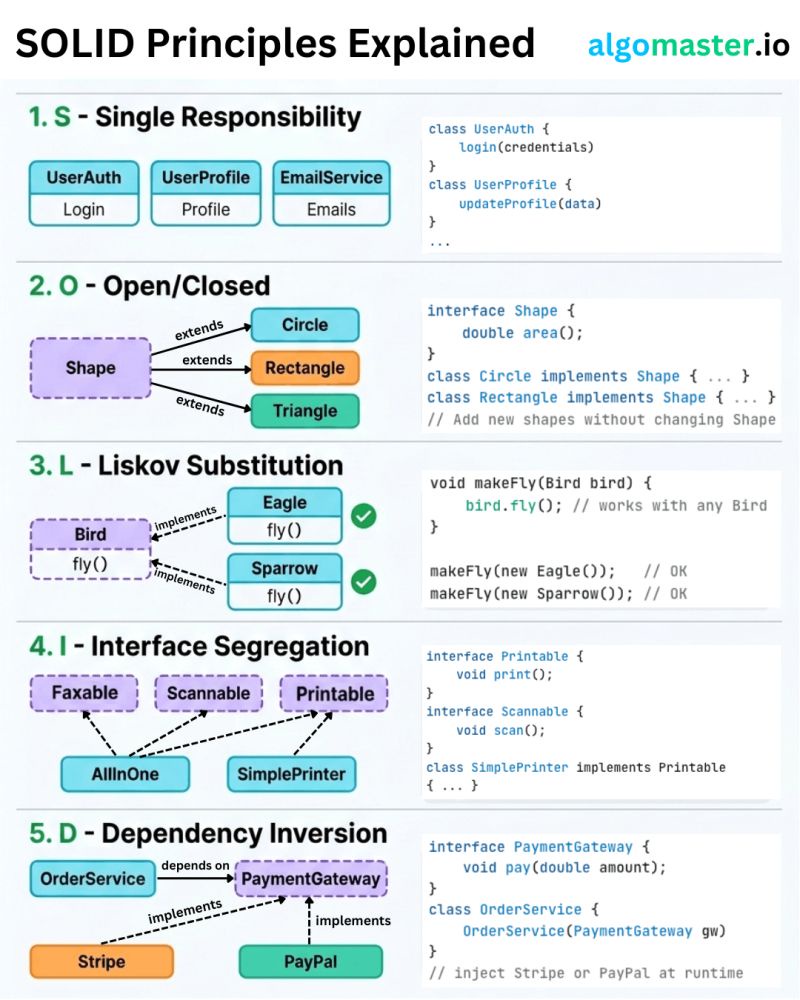

SOLID Principles

#solid #designprinciples #designpatterns #systemdesign

𝐒 – 𝐒𝐢𝐧𝐠𝐥𝐞 𝐑𝐞𝐬𝐩𝐨𝐧𝐬𝐢𝐛𝐢𝐥𝐢𝐭𝐲 𝐏𝐫𝐢𝐧𝐜𝐢𝐩𝐥𝐞 A class should have only one reason to change. - Example: Instead of one giant User class that handles authentication, profile updates, and sending emails, split it into UserAuth, UserProfile, and EmailService.
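A minimal sketch of that split. The class names come from the example above; the method names and in-memory storage are assumptions made for illustration.

```python
class UserAuth:
    """Changes only when the login rules change."""
    def __init__(self, credentials: dict):
        # Sketch only: a real system would store password hashes, not plain text.
        self._credentials = credentials

    def login(self, username: str, password: str) -> bool:
        return self._credentials.get(username) == password


class UserProfile:
    """Changes only when profile data or its rules change."""
    def __init__(self):
        self._display_names = {}

    def update_display_name(self, username: str, name: str) -> None:
        self._display_names[username] = name.strip()

    def display_name(self, username: str) -> str:
        return self._display_names.get(username, username)


class EmailService:
    """Changes only when the way emails are sent changes."""
    def __init__(self):
        self.outbox = []  # stand-in for a real mail transport

    def send(self, to: str, subject: str) -> None:
        self.outbox.append((to, subject))


auth = UserAuth({"ada": "s3cret"})
profile = UserProfile()
mailer = EmailService()

if auth.login("ada", "s3cret"):
    profile.update_display_name("ada", " Ada L. ")
    mailer.send("ada@example.com", "Profile updated")
```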

𝐎 – 𝐎𝐩𝐞𝐧/𝐂𝐥𝐨𝐬𝐞𝐝 𝐏𝐫𝐢𝐧𝐜𝐢𝐩𝐥𝐞 Classes should be open for extension but closed for modification. - Example: Define a Shape interface with an area() method. When you need a new shape, just add a Circle or Triangle class that implements it.
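Sketched in Python with an abstract base class standing in for the interface. `total_area` and `Rectangle` are hypothetical additions, included to show existing code that stays untouched when a new shape arrives.

```python
import math
from abc import ABC, abstractmethod

class Shape(ABC):
    @abstractmethod
    def area(self) -> float:
        ...

class Rectangle(Shape):
    def __init__(self, width: float, height: float):
        self.width, self.height = width, height

    def area(self) -> float:
        return self.width * self.height

class Circle(Shape):
    def __init__(self, radius: float):
        self.radius = radius

    def area(self) -> float:
        return math.pi * self.radius ** 2

def total_area(shapes) -> float:
    # Existing code: depends only on Shape.area(), never on concrete types.
    return sum(shape.area() for shape in shapes)

# Added later. Nothing above had to be edited to support it.
class Triangle(Shape):
    def __init__(self, base: float, height: float):
        self.base, self.height = base, height

    def area(self) -> float:
        return 0.5 * self.base * self.height

print(total_area([Rectangle(2, 3), Triangle(4, 5)]))  # 16.0
```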

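𝐋 – 𝐋𝐢𝐬𝐤𝐨𝐯 𝐒𝐮𝐛𝐬𝐭𝐢𝐭𝐮𝐭𝐢𝐨𝐧 𝐏𝐫𝐢𝐧𝐜𝐢𝐩𝐥𝐞 Subtypes must be substitutable for their base types without breaking behavior. - Example: If Bird has a fly() method, then Eagle and Sparrow should both work anywhere a Bird is expected. A Penguin subclass whose fly() throws an error would break that promise, which is a sign the base type is wrong.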
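Here is that idea as a sketch. `launch` and `Penguin` are hypothetical additions: the first is code written against `Bird`, the second is a classic counterexample that compiles fine but breaks the caller.

```python
class Bird:
    def fly(self) -> str:
        return "flap"

class Eagle(Bird):
    def fly(self) -> str:
        return "soar"

class Sparrow(Bird):
    def fly(self) -> str:
        return "flutter"

def launch(bird: Bird) -> str:
    # Written against Bird; should work for every subtype.
    return bird.fly()

print(launch(Eagle()), launch(Sparrow()))  # soar flutter

# Violation: a subtype that cannot honor the base type's contract.
class Penguin(Bird):
    def fly(self) -> str:
        raise NotImplementedError("penguins cannot fly")

try:
    launch(Penguin())
except NotImplementedError as exc:
    print("substitution broke the caller:", exc)
```

A common fix is to move `fly()` out of `Bird` into a narrower type that only flying birds implement.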

𝐈 – 𝐈𝐧𝐭𝐞𝐫𝐟𝐚𝐜𝐞 𝐒𝐞𝐠𝐫𝐞𝐠𝐚𝐭𝐢𝐨𝐧 𝐏𝐫𝐢𝐧𝐜𝐢𝐩𝐥𝐞 Don't force classes to implement interfaces they don't use. - Example: Instead of one fat Machine interface with print(), scan(), and fax(), break it into Printable, Scannable, and Faxable. A SimplePrinter only implements Printable.
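One way to sketch this in Python is with `typing.Protocol`. The method signatures, `OfficeMachine`, and `run_print_job` are assumptions made for illustration.

```python
from typing import Protocol

class Printable(Protocol):
    def print(self, doc: str) -> str: ...

class Scannable(Protocol):
    def scan(self) -> str: ...

class Faxable(Protocol):
    def fax(self, doc: str, number: str) -> str: ...

class SimplePrinter:
    # Implements only what it can actually do.
    def print(self, doc: str) -> str:
        return f"printed: {doc}"

class OfficeMachine:
    # A bigger device opts into all three small interfaces.
    def print(self, doc: str) -> str:
        return f"printed: {doc}"

    def scan(self) -> str:
        return "scanned page"

    def fax(self, doc: str, number: str) -> str:
        return f"faxed {doc} to {number}"

def run_print_job(device: Printable, doc: str) -> str:
    # Asks only for the capability it needs.
    return device.print(doc)

print(run_print_job(SimplePrinter(), "report"))  # printed: report
print(run_print_job(OfficeMachine(), "report"))  # printed: report
```

`SimplePrinter` never has to stub out `scan()` or `fax()` just to satisfy an interface it doesn't use.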

𝐃 – 𝐃𝐞𝐩𝐞𝐧𝐝𝐞𝐧𝐜𝐲 𝐈𝐧𝐯𝐞𝐫𝐬𝐢𝐨𝐧 𝐏𝐫𝐢𝐧𝐜𝐢𝐩𝐥𝐞 High-level modules should not depend on low-level modules. Both should depend on abstractions. - Example: Your OrderService should depend on a PaymentGateway interface, not directly on Stripe or PayPal.
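A rough sketch of that wiring. `StripeGateway` here is a hypothetical adapter with made-up behavior and no real Stripe SDK calls, and `FakeGateway` is a test double added to show the payoff.

```python
from abc import ABC, abstractmethod

class PaymentGateway(ABC):
    """The abstraction both sides depend on."""
    @abstractmethod
    def charge(self, amount_cents: int) -> str:
        ...

class StripeGateway(PaymentGateway):
    # Hypothetical adapter: a real one would call the provider's SDK here.
    def charge(self, amount_cents: int) -> str:
        return f"stripe-receipt-{amount_cents}"

class FakeGateway(PaymentGateway):
    # Test double: records charges instead of moving money.
    def __init__(self):
        self.charges = []

    def charge(self, amount_cents: int) -> str:
        self.charges.append(amount_cents)
        return "fake-receipt"

class OrderService:
    def __init__(self, gateway: PaymentGateway):
        self._gateway = gateway  # injected; OrderService never names a provider

    def checkout(self, amount_cents: int) -> str:
        if amount_cents <= 0:
            raise ValueError("amount must be positive")
        return self._gateway.charge(amount_cents)

print(OrderService(StripeGateway()).checkout(1999))  # stripe-receipt-1999

fake = FakeGateway()
print(OrderService(fake).checkout(500), fake.charges)  # fake-receipt [500]
```

Swapping providers, or testing without one, becomes a one-line change at the place where `OrderService` is constructed.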

The real power of SOLID is not in following each principle in isolation. It's in how they work together to make your code easier to change, test, and extend.

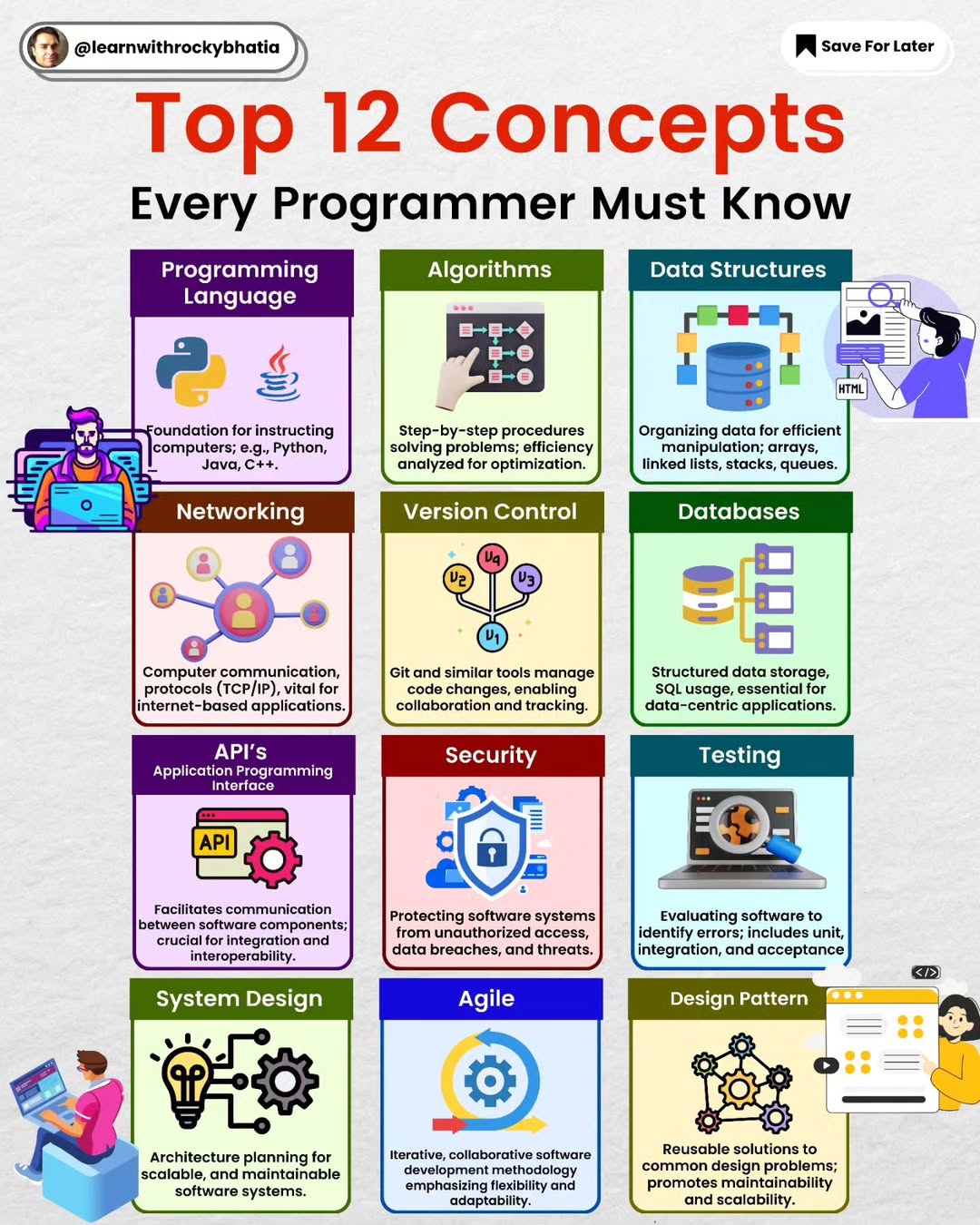

12 Essential Programmer Concepts

#programmingconcepts #systemdesign #security #coding #datastructures #algorithms #networking #versioncontrol #git #databases #api #agile

This comprehensive set of concepts forms a strong foundation for programmers, covering a range of skills from programming fundamentals to system design and security considerations.

1. Introduction to Programming Languages: A foundational understanding of at least one programming language (e.g., Python, Java, C++), enabling the ability to comprehend and switch between languages as needed.

2. Data Structures Mastery: Proficiency in fundamental data structures such as arrays, linked lists, stacks, queues, trees, and graphs, essential for effective algorithmic problem solving.

3. Algorithms Proficiency: Familiarity with common algorithms and problem solving techniques, including sorting, searching, and dynamic programming, to optimise code efficiency.

4. Database Systems Knowledge: Understanding of database systems, covering relational databases (e.g., SQL) and NoSQL databases (e.g., MongoDB), crucial for efficient data storage and retrieval.

5. Version Control Mastery: Proficiency with version control systems like Git, encompassing skills in branching, merging, and collaboration workflows for effective team development.

6. Agile Methodology Understanding: Knowledge of the Agile Software Development Life Cycle (Agile SDLC) principles, emphasizing iterative development, Scrum, and Kanban for adaptable project management.

7. Web Development Basics (Networking): Fundamental understanding of networking concepts, including protocols, IP addressing, and HTTP, essential for web development and communication between systems.

8. APIs (Application Programming Interfaces) Expertise: Understanding how to use and create APIs, critical for integrating different software systems and enabling seamless communication between applications.

9. Testing and Debugging Skills: Proficiency in testing methodologies, unit testing, and debugging techniques to ensure code quality and identify and fix errors effectively.

10. Design Patterns Familiarity: Knowledge of common design patterns in object-oriented programming, aiding in solving recurring design problems and enhancing code maintainability.

11. System Design Principles: Understanding of system design, including architectural patterns, scalability, and reliability, to create robust and efficient software systems.

12. Security Awareness: Fundamental knowledge of security principles, including encryption, authentication, and best practices for securing applications and data.

Other areas worth adding include operating systems, containers, concurrency and parallelism, and basic web development.

API Architectural Styles

#api #architecturalstyles #soap #rest #graphql #grpc #websocket #webhook

𝐓𝐨𝐩 𝟔 𝐀𝐏𝐈 𝐚𝐫𝐜𝐡𝐢𝐭𝐞𝐜𝐭𝐮𝐫𝐞 𝐒𝐭𝐲𝐥𝐞𝐬

APIs serve as the backbone of modern software development, enabling seamless integration and communication between various components. Understanding the different API architecture styles is crucial for choosing the most suitable approach for your project. Below are the top six API architecture styles along with their recommended use cases:

1️⃣ SOAP (Simple Object Access Protocol): SOAP is ideal for enterprise-level applications that require a standardized protocol for exchanging structured information. Its robust features include strongly typed contracts (described with WSDL and XML Schema) and message-level security (WS-Security), making it suitable for complex and regulated environments.

2️⃣ RESTful (Representational State Transfer): RESTful APIs prioritize simplicity and scalability, making them well-suited for web services, particularly those catering to public-facing applications. With a stateless, resource-oriented design, RESTful APIs facilitate efficient communication between clients and servers.

3️⃣ GraphQL: GraphQL shines in scenarios where flexibility and client-driven data retrieval are paramount. By allowing clients to specify the exact data they need, GraphQL minimizes over-fetching and under-fetching, resulting in optimized performance and reduced network traffic.

4️⃣ gRPC: For high-performance, low-latency communication, gRPC emerges as the preferred choice. Widely adopted in microservices architectures, gRPC offers efficient data serialization and bi-directional streaming capabilities, making it suitable for real-time applications and distributed systems.

5️⃣ WebSockets: WebSockets excel in applications requiring real-time, bidirectional communication, such as chat platforms and online gaming. By establishing a persistent connection between clients and servers, WebSockets enable instant data updates and seamless interaction experiences.

6️⃣ Webhooks: In event-driven systems, webhooks play a vital role by allowing applications to react to specific events in real time. Instead of your application polling for changes, the provider sends an HTTP request to a URL you register whenever something happens. Whether it's notifying about data updates or triggering actions based on user activities, webhooks facilitate seamless integration and automation.
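As one illustration from the list above, here is a rough sketch of the receiving side of a webhook: parse the incoming event and hand it to whatever handlers are registered for its type. The payload shape (`type` and `data` keys), the event name, and the helper names are assumptions for illustration. Real providers each define their own format, and the HTTP server part is left out so the sketch runs on its own.

```python
import json
from collections import defaultdict

handlers = defaultdict(list)  # event type -> list of handler functions

def on(event_type: str):
    """Decorator that registers a handler for one event type."""
    def register(fn):
        handlers[event_type].append(fn)
        return fn
    return register

@on("order.paid")
def start_shipping(data: dict) -> str:
    return f"ship order {data['order_id']}"

def handle_webhook(body: str) -> list:
    # In a real service this would run inside the HTTP endpoint the provider calls.
    event = json.loads(body)
    return [handler(event["data"]) for handler in handlers.get(event["type"], [])]

incoming = json.dumps({"type": "order.paid", "data": {"order_id": 7}})
print(handle_webhook(incoming))  # ['ship order 7']

ignored = json.dumps({"type": "order.refunded", "data": {"order_id": 7}})
print(handle_webhook(ignored))   # []
```

Unknown event types simply fall through with no handlers, which keeps the endpoint tolerant of events you haven't subscribed to yet.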

Selecting the appropriate API style is crucial for optimising your application's performance and enhancing user experience. By understanding the strengths and use cases of each architecture style, you can make informed decisions that align with your project's specific requirements.